Contents Science Lab

In Japanese, human gait (the way a person appears when walking) is often described with mimetic words (or “onomatopoeia”) such as “sutasuta” and “yoroyoro”.

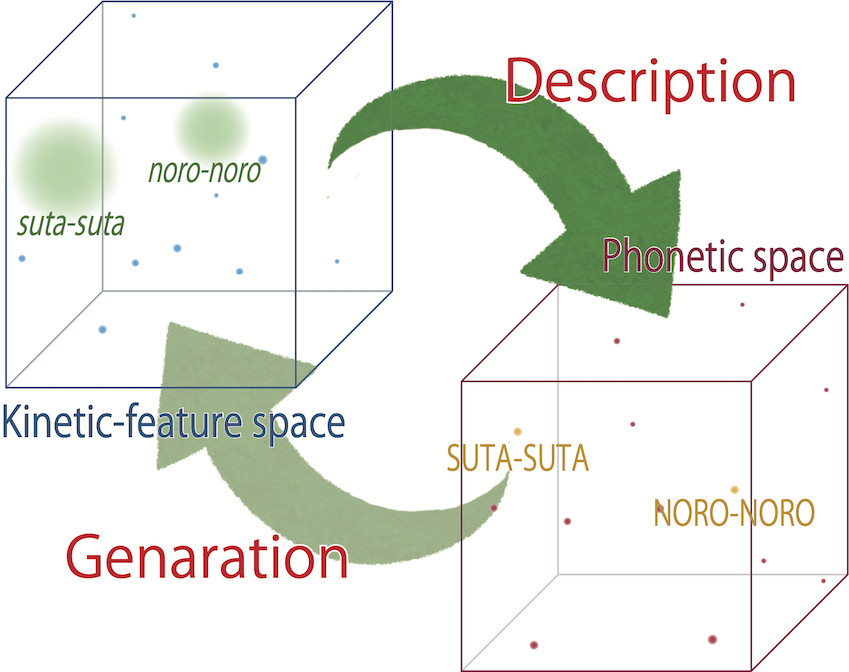

In our research, we aim to build a framework that describes gaits in mimetic words and, conversely, generates gaits from them. An AI that interacts with and assists humans should be able to take in and produce information in a form that is easy for humans to understand. To that end, machines need to better analyze and understand human senses, perceived textures, and the like. Human senses are known to show co-occurrence relationships across multiple modalities, as exemplified by the Bouba-Kiki effect (the relationship between phonemes and the shapes of objects). We try to model such a relationship for human gaits so that a machine can describe them the way a human would.

Data-driven modeling of these relationships between modalities, using an informatics approach, not only helps to elucidate the cross-modal nature of human senses but also helps computers understand those senses.

We published a dataset for this research: http://www.cs.is.i.nagoya-u.ac.jp/opensource/hoyo/

Postdoctoral Researcher (Institutes of Innovation for Future Society)